Physically-Based Rendering

We aim to generate synthetic images indistinguishable from reality by accurately modeling the underlying physics governing light transport. To that end, we propose new numerical methods for efficient and robust light transport simulation, and combine them with novel models of light-matter interactions.

One of the long-standing goals of Computer Graphics is to generate images that mimic reality as accurately as possible. This has a vast range of applications, including entertainment, architecture, and engineering. However, rendering faithful images requires simulating the complex physical phenomena of light interactions by solving large multidimensional integrals. Our goal in this field is to develop new robust and efficient numerical techniques for light transport simulation, and to model in depth the complex interactions between light and matter.

Publications

Virtualized Point Cloud Rendering

José Antonio Collado, Alfonso López Ruiz, Juan Manuel Jurado, Juan Roberto Jiménez

IEEE Transactions on Visualization and Computer Graphics

Abstract: Remote sensing technologies, such as LiDAR, produce billions of points that commonly exceed the storage capacity of the GPU, restricting their processing and rendering. Level of detail (LoD) techniques have been widely investigated, but building the LoD structures is also time-consuming. This study proposes a GPU-driven culling system focused on determining the number of points visible in every frame. It can manipulate point clouds of any arbitrary size while maintaining a low memory footprint in both the CPU and GPU. Instead of organizing point clouds into hierarchical data structures, these are split into groups of points sorted using the Hilbert encoding. This alternative alleviates the occurrence of anomalous groups found in Morton curves. Instead of keeping the entire point cloud in the GPU, points are transferred on demand to ensure real-time capability. Accordingly, our solution can manipulate huge point clouds even in commodity hardware with low memory capacities. Moreover, hole filling is implemented to cover the gaps derived from insufficient density and our LoD system. Our proposal was evaluated with point clouds of up to 18 billion points, achieving an average of 80 frames per second (FPS) without perceptible quality loss. Relaxing memory constraints further enhances visual quality while maintaining an interactive frame rate. We assessed our method on real-world data, comparing it against three state-of-the-art methods, demonstrating its ability to handle significantly larger point clouds.
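To illustrate why a Hilbert ordering can give more spatially coherent groups than a Morton ordering, the following minimal sketch compares both curves on a small 2D grid. It is a simplification under stated assumptions: the paper works with 3D points on the GPU, and `morton_index`, `hilbert_index`, and `max_jump` are hypothetical helper names, not part of the authors' system.

```python
def morton_index(x, y, bits):
    """Interleave the bits of x and y (Z-order / Morton code)."""
    d = 0
    for b in range(bits):
        d |= ((x >> b) & 1) << (2 * b)
        d |= ((y >> b) & 1) << (2 * b + 1)
    return d

def hilbert_index(n, x, y):
    """Map cell (x, y) of an n-by-n grid (n a power of two) to its Hilbert index."""
    d = 0
    s = n // 2
    while s > 0:
        rx = 1 if (x & s) else 0
        ry = 1 if (y & s) else 0
        d += s * s * ((3 * rx) ^ ry)
        # Rotate/flip the quadrant so the sub-curve has the right orientation.
        if ry == 0:
            if rx == 1:
                x, y = n - 1 - x, n - 1 - y
            x, y = y, x
        s //= 2
    return d

def max_jump(order):
    """Largest Manhattan distance between consecutive cells along a curve."""
    return max(abs(ax - bx) + abs(ay - by)
               for (ax, ay), (bx, by) in zip(order, order[1:]))

n = 4  # 4x4 grid, i.e. 2 bits per coordinate
cells = [(x, y) for x in range(n) for y in range(n)]
hilbert_order = sorted(cells, key=lambda c: hilbert_index(n, c[0], c[1]))
morton_order = sorted(cells, key=lambda c: morton_index(c[0], c[1], 2))

print("Hilbert max jump:", max_jump(hilbert_order))
print("Morton max jump:", max_jump(morton_order))
```

Consecutive Hilbert indices always land in neighboring cells, whereas the Morton curve occasionally jumps across the grid, which is one way groups of consecutive points can end up spatially scattered.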

Procedural Multiscale Geometry Modeling using Implicit Surfaces

Bojja Venu, Adam Bosak, Juan Raúl Padrón Griffe

Computer Graphics Forum (Pacific Graphics 2025)

Abstract: Materials exhibit geometric structures across mesoscopic to microscopic scales, influencing macroscale properties such as appearance, mechanical strength, and thermal behavior. Capturing and modeling these multiscale structures is challenging but essential for computer graphics, engineering, and materials science. We present a framework inspired by hypertexture methods, using implicit surfaces and sphere tracing to synthesize multiscale structures on the fly without precomputation. This framework models volumetric materials with particulate, fibrous, porous, and laminar structures, allowing control over size, shape, density, distribution, and orientation. We enhance structural diversity by superimposing implicit periodic functions while improving computational efficiency. The framework also supports spatially varying particulate media, particle agglomeration, and piling on convex and concave structures, such as rock formations (mesoscale), without explicit simulation. We demonstrate its potential in the appearance modeling of volumetric materials and investigate how spatially varying properties affect the perceived macroscale appearance. As a proof of concept, we show that microstructures created by our framework can be reconstructed from image and distance values defined by implicit surfaces, using both first-order and gradient-free optimization methods.
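As a rough illustration of sphere tracing on an implicit surface, the sketch below marches a ray through a signed distance function (SDF). The single-sphere SDF and all names (`sphere_sdf`, `sphere_trace`) and parameter values are assumptions chosen for illustration; the framework described above composes far richer procedural structures.

```python
import math

def sphere_sdf(p, center=(0.0, 0.0, 0.0), radius=1.0):
    """Signed distance from point p to a sphere (illustrative implicit surface)."""
    return math.dist(p, center) - radius

def sphere_trace(origin, direction, sdf, max_steps=128, eps=1e-4, t_max=100.0):
    """March along a unit-length ray, advancing by the SDF value at each step.

    Returns the distance to the first hit, or None if the ray escapes.
    """
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * d for o, d in zip(origin, direction))
        dist = sdf(p)
        if dist < eps:      # close enough to the surface: report a hit
            return t
        t += dist           # safe step: no surface is closer than dist
        if t > t_max:       # the ray left the region of interest
            break
    return None

hit_t = sphere_trace((0.0, 0.0, -3.0), (0.0, 0.0, 1.0), sphere_sdf)
miss_t = sphere_trace((0.0, 5.0, -3.0), (0.0, 0.0, 1.0), sphere_sdf)
print(hit_t, miss_t)
```

Because the SDF is evaluated on demand at each step, nothing needs to be precomputed or stored, which is the property that makes on-the-fly synthesis possible.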

Polarimetric BSSRDF Acquisition of Dynamic Faces

Hyunho Ha, Inseung Hwang, Nestor Monzon, Jaemin Cho, Donggun Kim, Seung-Hwan Baek, Adolfo Muñoz, Diego Gutierrez, Min H. Kim

ACM Transactions on Graphics (Proc. SIGGRAPH Asia 2024)

Abstract: Acquisition and modeling of polarized light reflection and scattering help reveal the shape, structure, and physical characteristics of an object, which is increasingly important in computer graphics. However, current polarimetric acquisition systems are limited to static and opaque objects. Human faces, on the other hand, present a particularly difficult challenge, given their complex structure and reflectance properties, the strong presence of spatially-varying subsurface scattering, and their dynamic nature. We present a new polarimetric acquisition method for dynamic human faces, which focuses on capturing spatially varying appearance and precise geometry, across a wide spectrum of skin tones and facial expressions. It includes both single and heterogeneous subsurface scattering, index of refraction, and specular roughness and intensity, among other parameters, while revealing biophysically-based components such as inner- and outer-layer hemoglobin, eumelanin and pheomelanin. Our method leverages such components' unique multispectral absorption profiles to quantify their concentrations, which in turn inform our model about the complex interactions occurring within the skin layers. To our knowledge, our work is the first to simultaneously acquire polarimetric and spectral reflectance information alongside biophysically-based skin parameters and geometry of dynamic human faces. Moreover, our polarimetric skin model integrates seamlessly into various rendering pipelines.

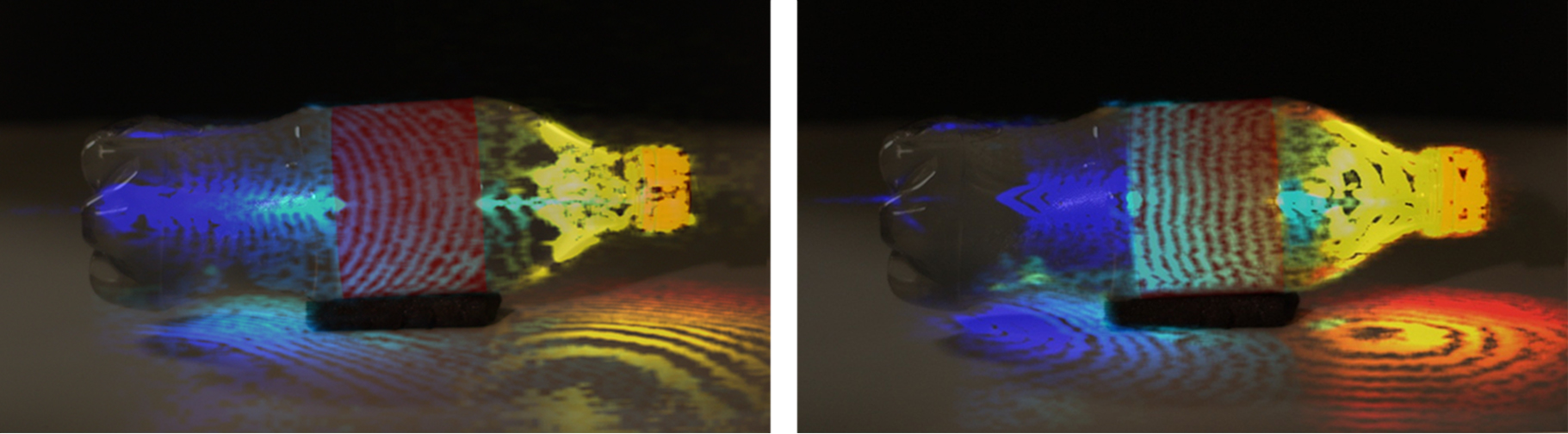

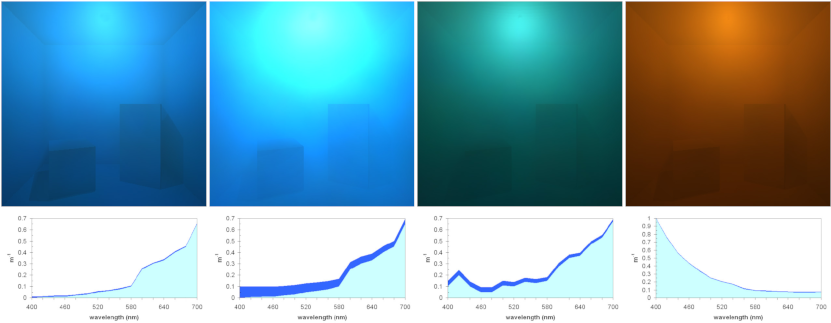

Real-Time Underwater Spectral Rendering

Nestor Monzon, Diego Gutierrez, Derya Akkaynak, Adolfo Muñoz

Computer Graphics Forum (Eurographics 2024)

Abstract: The light field in an underwater environment is characterized by complex multiple scattering interactions and wavelength-dependent attenuation, requiring significant computational resources for the simulation of underwater scenes. We present a novel approach that makes it possible to simulate multi-spectral underwater scenes, in a physically-based manner, in real time. Our key observation is the following: In the vertical direction, the steady decay in irradiance as a function of depth is characterized by the diffuse downwelling attenuation coefficient, which oceanographers routinely measure for different types of waters. We rely on a database of such real-world measurements to obtain an analytical approximation to the Radiative Transfer Equation, allowing for real-time spectral rendering with results comparable to Monte Carlo ground-truth references, in a fraction of the time. We show results simulating underwater appearance for the different optical water types, including volumetric shadows and dynamic, spatially varying lighting near the water surface.
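The key observation can be sketched with the standard exponential decay of downwelling irradiance with depth, E_d(z) = E_d(0) exp(-K_d z), assuming a depth-independent K_d per spectral band. The coefficient values and the function name below are placeholders for illustration only, not measurements from the database used in the paper.

```python
import math

# Illustrative diffuse downwelling attenuation coefficients K_d, in 1/m.
# Placeholder values chosen so that red attenuates fastest; not real data.
K_D = {"red": 0.40, "green": 0.07, "blue": 0.03}

def downwelling_irradiance(surface_irradiance, depth_m, k_d=K_D):
    """Irradiance per band at a given depth, assuming constant K_d with depth."""
    return {band: surface_irradiance[band] * math.exp(-k_d[band] * depth_m)
            for band in k_d}

surface = {"red": 1.0, "green": 1.0, "blue": 1.0}
at_10m = downwelling_irradiance(surface, 10.0)
print(at_10m)
```

With coefficients of this kind, red light fades within a few meters while blue persists, which produces the familiar blue-green cast of underwater scenes.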

Practical Appearance Model for Foundation Cosmetics

Dario Lanza, Juan Raúl Padrón-Griffe, Alina Pranovich, Adolfo Muñoz, Jeppe Frisvad, Adrian Jarabo

Computer Graphics Forum (EGSR 2024)

Abstract: Cosmetic products have found their place in various aspects of human life, yet their digital appearance reproduction has received little attention. We present an appearance model for cosmetics, in particular for foundation layers, that reproduces a range of existing appearances of foundation cosmetics: from a glossy to a matte to an almost velvety look. Our model is a multilayered BSDF that reproduces the stacking of multiple layers of cosmetics. Inspired by the microscopic particulates used in cosmetics, we model each individual layer as a stochastic participating medium with two types of scatterers that mimic the most prominent visual features of cosmetics: spherical diffusers, resulting in a uniform distribution of radiance; and platelets, responsible for the glossy look of certain cosmetics. We implement our model on top of the position-free Monte Carlo framework, that allows us to include multiple scattering. We validate our model against measured reflectance data, and demonstrate the versatility and expressiveness of our model by thoroughly exploring the range of appearances that it can produce.

A Surface-based Appearance Model for Pennaceous Feathers

Juan Raúl Padrón Griffe, Dario Lanza, Adrian Jarabo, Adolfo Muñoz

Computer Graphics Forum (Pacific Graphics 2024)

Abstract: The appearance of a real-world feather results from the complex interaction of light with its multi-scale biological structure, including the central shaft, branching barbs, and interlocking barbules on those barbs. In this work, we propose a practical surface-based appearance model for feathers. We represent the far-field appearance of feathers using a BSDF that implicitly represents the light scattering from the main biological structures of a feather, such as the shaft, barb and barbules. Our model accounts for the particular characteristics of feather barbs such as the non-cylindrical cross-sections and the scattering media via a numerically-based BCSDF. To model the relative visibility between barbs and barbules, we derive a masking term for the differential projected areas of the different components of the feather’s microgeometry, which allows us to analytically compute the masking between barbs and barbules. As opposed to previous works, our model uses a lightweight representation of the geometry based on a 2D texture, and does not require explicitly representing the barbs as curves. We show the flexibility and potential of our appearance model approach to represent the most important visual features of several pennaceous feathers.

A Biologically-Inspired Appearance Model for Snake Skin

Juan Padron, Diego Bielsa, Adrian Jarabo, Adolfo Muñoz

Spanish Computer Graphics Conference (CEIG), 2023

Abstract: Simulating light transport in biological tissues is a longstanding challenge, given their complex multilayered structure. In biology, one of the most remarkable and studied examples of tissues are the scales that cover the skin of reptiles, which present a combination of photonic structures and pigmentation. This is, however, a somewhat ignored problem in computer graphics. In this work, we propose a multilayered appearance model based on the anatomy of the snake skin. Some snakes are known for their striking, highly iridescent scales resulting from light interference. We model snake skin as a two-layered reflectance function: the top layer is a thin layer resulting in a specular iridescent reflection, while the bottom layer is a diffuse, highly absorbing layer that results in a dark diffuse appearance that maximizes the iridescent color of the skin. We demonstrate our layered material on a wide range of appearances, and show that our model is able to qualitatively match the appearance of snake skin.

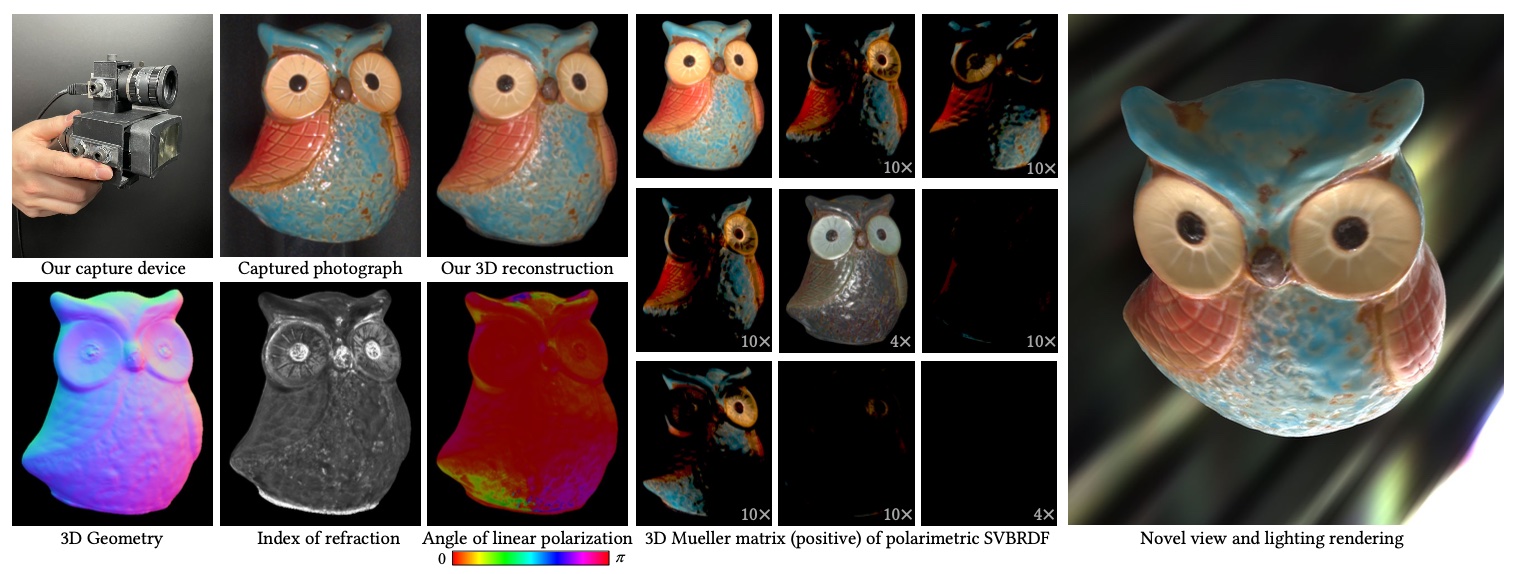

Sparse Ellipsometry: Portable Acquisition of Polarimetric SVBRDF and Shape with Unstructured Flash Photography

Inseung Hwang, Daniel S. Jeon, Adolfo Muñoz, Diego Gutierrez, Xin Tong, Min H. Kim

ACM Transactions on Graphics (SIGGRAPH 2022)

Abstract: Ellipsometry techniques make it possible to measure polarization information of materials, requiring precise rotations of optical components with different configurations of lights and sensors. This results in cumbersome capture devices, carefully calibrated in lab conditions, and in very long acquisition times, usually in the order of a few days per object. Recent techniques can capture polarimetric spatially-varying reflectance information, but are limited to a single view; others cover all view directions, but are limited to spherical objects made of a single homogeneous material. We present sparse ellipsometry, a portable polarimetric acquisition method that captures both polarimetric SVBRDF and 3D shape simultaneously. Our handheld device consists of off-the-shelf, fixed optical components. Instead of days, the total acquisition time varies between twenty and thirty minutes per object. We develop a complete polarimetric SVBRDF model that includes diffuse and specular components, as well as single scattering, and devise a novel polarimetric inverse rendering algorithm with data augmentation of specular reflection samples via generative modeling. Our results show a strong agreement with a recent ground-truth dataset of captured polarimetric BRDFs of real-world objects.

A Learned Radiance-Field Representation for Complex Luminaires

Jorge Condor, Adrian Jarabo

Eurographics Symposium on Rendering (EGSR), 2022

Abstract: We propose an efficient method for rendering complex luminaires using a high-quality octree-based representation of the luminaire emission. Complex luminaires are a particularly challenging problem in rendering, due to their caustic light paths inside the luminaire. We reduce the geometric complexity of luminaires by using a simple proxy geometry, and encode the visually complex emitted light field by using a neural radiance field. We tackle the multiple challenges of using NeRFs for representing luminaires, including their high dynamic range, high-frequency content and null-emission areas, by proposing a specialized loss function. For rendering, we distill our luminaires' NeRF into a plenoctree, which can be easily integrated into traditional rendering systems. Our approach allows for speed-ups of up to 2 orders of magnitude in scenes containing complex luminaires while introducing minimal error.

Beyond Mie Theory: Systematic Computation of Bulk Scattering Parameters based on Microphysical Wave Optics

Yu Guo, Adrian Jarabo, Shuang Zhao

ACM Transactions on Graphics, Vol. 40(6) (SIGGRAPH Asia 2021)

Abstract: Light scattering in participating media and translucent materials is typically modeled using the radiative transfer theory. Under the assumption of independent scattering between particles, it utilizes several bulk scattering parameters to statistically characterize light-matter interactions at the macroscale. To calculate these parameters based on microscale material properties, the Lorenz-Mie theory has been considered the gold standard. In this paper, we present a generalized framework capable of systematically and rigorously computing bulk scattering parameters beyond the far-field assumption of Lorenz-Mie theory. Our technique accounts for microscale wave-optics effects such as diffraction and interference as well as interactions between nearby particles. Our framework is general, can be plugged in any renderer supporting Lorenz-Mie scattering, and allows arbitrary packing rates and particles correlation; we demonstrate this generality by computing bulk scattering parameters for a wide range of materials, including anisotropic and correlated media.

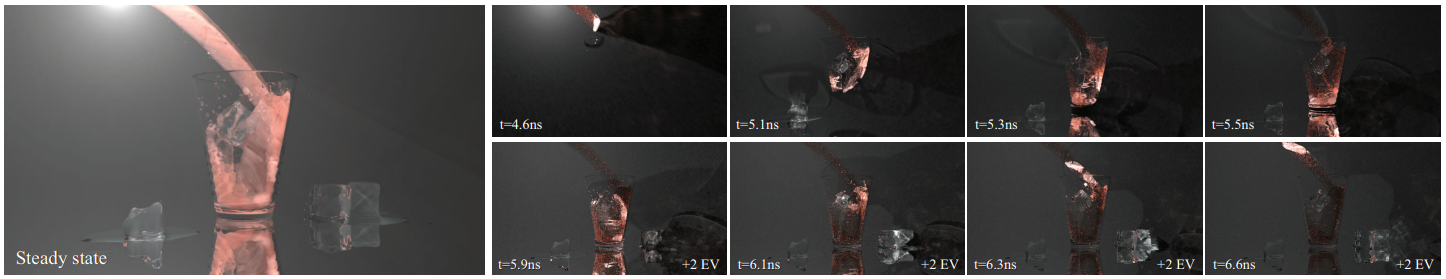

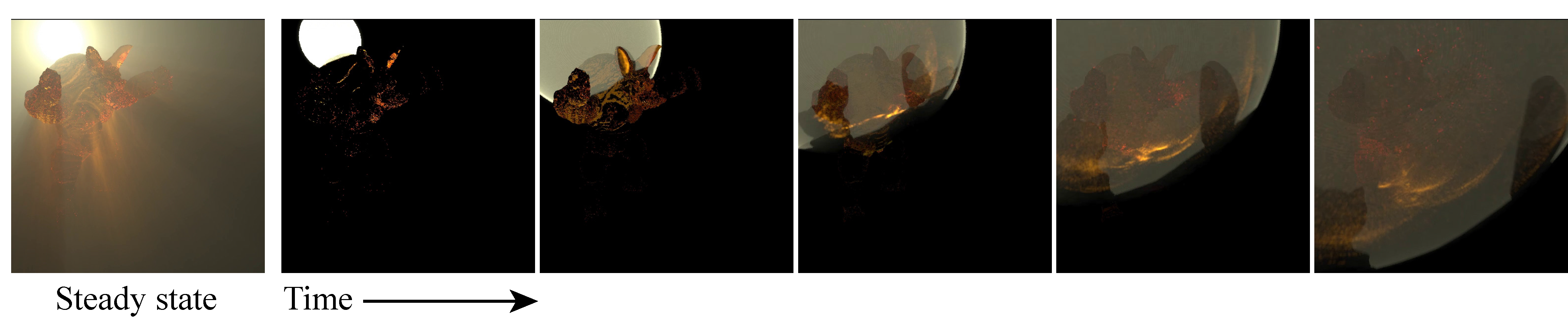

Differentiable Transient Rendering

Shinyoung Yi, Donggun Kim, Kiseok Choi, Adrian Jarabo, Diego Gutierrez, Min H. Kim

ACM Transactions on Graphics, Vol. 40(6) (SIGGRAPH Asia 2021)

Abstract: Recent differentiable rendering techniques have become key tools to tackle many inverse problems in graphics and vision. Existing models, however, assume steady-state light transport, i.e., infinite speed of light. While this is a safe assumption for many applications, recent advances in ultrafast imaging leverage the wealth of information that can be extracted from the exact time of flight of light. In this context, physically-based transient rendering allows to efficiently simulate and analyze light transport considering that the speed of light is indeed finite. In this paper, we introduce a novel differentiable transient rendering framework, to help bring the potential of differentiable approaches into the transient regime. To differentiate the transient path integral we need to take into account that scattering events at path vertices are no longer independent; instead, tracking the time of flight of light requires treating such scattering events at path vertices jointly as a multidimensional, evolving manifold. We thus turn to the generalized transport theorem, and introduce a novel correlated importance term, which links the time-integrated contribution of a path to its light throughput, and allows us to handle discontinuities in the light and sensor functions. Last, we present results in several challenging scenarios where the time of flight of light plays an important role such as optimizing indices of refraction, non-line-of-sight tracking with nonplanar relay walls, and non-line-of-sight tracking around two corners.

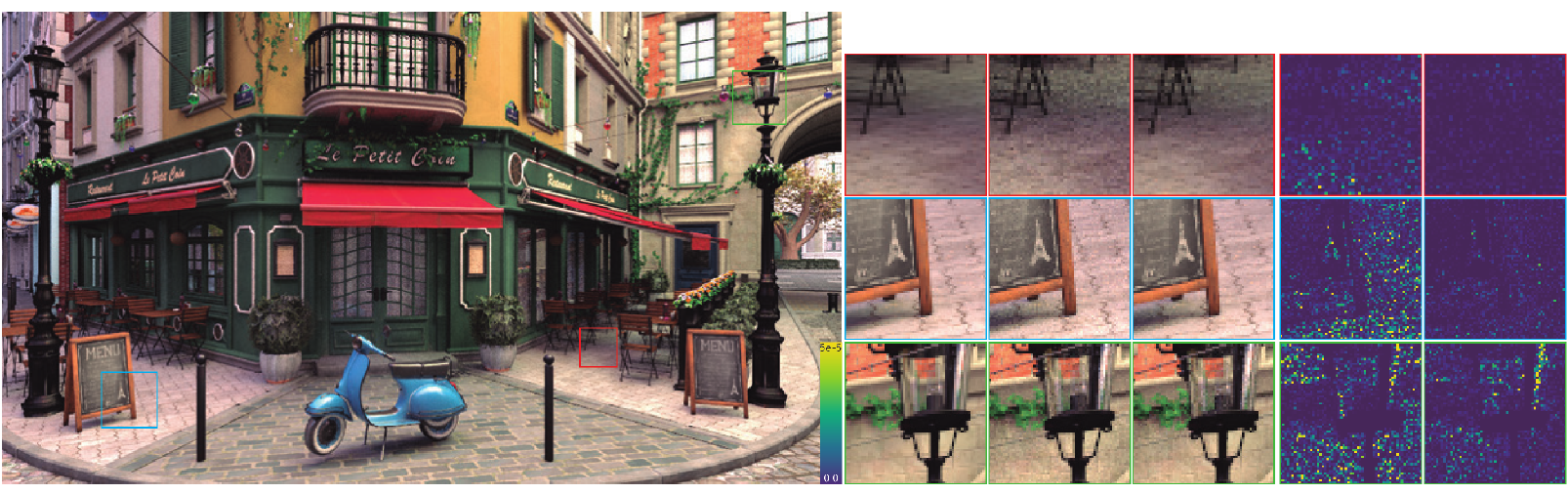

Primary-Space Adaptive Control Variates using Piecewise-Polynomial Approximations

Miguel Crespo, Adrian Jarabo, Adolfo Muñoz

ACM Transactions on Graphics, Vol. 40(3) (Presented at SIGGRAPH 2021)

Abstract: We present an unbiased numerical integration algorithm that handles both low-frequency regions and high-frequency details of multidimensional integrals. It combines quadrature and Monte Carlo integration, by using a quadrature-based approximation as a control variate of the signal. We adaptively build the control variate as a piecewise polynomial, which can be analytically integrated, and accurately reconstructs the low-frequency regions of the integrand. We then recover the high-frequency details missed by the control variate by using Monte Carlo integration of the residual. Our work leverages importance sampling techniques by working in primary space, allowing the combination of multiple mappings; this enables multiple importance sampling in quadrature-based integration. Our algorithm is generic, and can be applied to any complex multidimensional integral. We demonstrate its effectiveness with four applications with low dimensionality: transmittance estimation in heterogeneous participating media, low-order scattering in homogeneous media, direct illumination computation, and rendering of distributed effects. Finally, we show how our technique is extensible to integrands of higher dimensionality, by computing the control variate on Monte Carlo estimates of the high-dimensional signal, and accounting for such additional dimensionality on the residual as well. In all cases, we show accurate results and faster convergence compared to previous approaches.
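The core idea of a control variate can be sketched in one dimension: integrate an approximation analytically, then use Monte Carlo only on the residual. The example below is a deliberately simplified assumption, using a single fixed linear approximation of exp(x) on [0, 1] rather than the adaptive piecewise-polynomial construction in primary space described above; all function names are hypothetical.

```python
import math
import random

def f(x):
    """Integrand: exp(x) on [0, 1], whose exact integral is e - 1."""
    return math.exp(x)

def g(x):
    """Control variate: first-order Taylor expansion of exp(x) around 0."""
    return 1.0 + x

G_INTEGRAL = 1.5  # exact integral of g over [0, 1]

def estimate_with_control_variate(num_samples, seed=0):
    """Analytic integral of g plus a Monte Carlo estimate of the residual f - g."""
    rng = random.Random(seed)
    residual_sum = 0.0
    for _ in range(num_samples):
        x = rng.random()
        residual_sum += f(x) - g(x)
    return G_INTEGRAL + residual_sum / num_samples

def sample_variance(values):
    """Unbiased sample variance of a list of numbers."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / (len(values) - 1)

rng = random.Random(1)
xs = [rng.random() for _ in range(10000)]
var_plain = sample_variance([f(x) for x in xs])
var_residual = sample_variance([f(x) - g(x) for x in xs])

print(estimate_with_control_variate(10000), math.e - 1.0)
print(var_plain, var_residual)
```

The estimator stays unbiased for any choice of `g` with a known integral; a better approximation only shrinks the variance of the residual, which is why a more accurate piecewise fit pays off.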

A General Framework for Pearlescent Materials

Ibón Guillén, Julio Marco, Diego Gutierrez, Wenzel Jakob, Adrian Jarabo

ACM Transactions on Graphics, Vol. 39(6) (SIGGRAPH Asia 2020)

Abstract: The unique and visually mesmerizing appearance of pearlescent materials has made them an indispensable ingredient in a diverse array of applications including packaging, ceramics, printing, and cosmetics. In contrast to their natural counterparts, such synthetic examples of pearlescence are created by dispersing microscopic interference pigments within a dielectric resin. The resulting space of materials comprises an enormous range of different phenomena ranging from smooth lustrous appearance reminiscent of pearl to highly directional metallic gloss, along with a gradual change in color that depends on the angle of observation and illumination. All of these properties arise due to a complex optical process involving multiple scattering from platelets characterized by wave-optical interference. This article introduces a flexible model for simulating the optics of such pearlescent 3D microstructures. Following a thorough review of the properties of currently used pigments and manufacturing-related effects that influence pearlescence, we propose a new model which expands the range of appearance that can be represented, and closely reproduces the behavior of measured materials, as we show in our comparisons. Using our model, we conduct a systematic study of the parameter space and its relationship to different aspects of pearlescent appearance. We observe that several previously ignored parameters have a substantial impact on the material's optical behavior, including the multi-layered nature of modern interference pigments, correlations in the orientation of pigment particles, and variability in their properties (e.g., thickness). The utility of a general model for pearlescence extends far beyond computer graphics: inverse and differentiable approaches to rendering are increasingly used to disentangle the physics of scattering from real-world observations. Our approach could inform such reconstructions to enable the predictive design of tailored pearlescent materials.

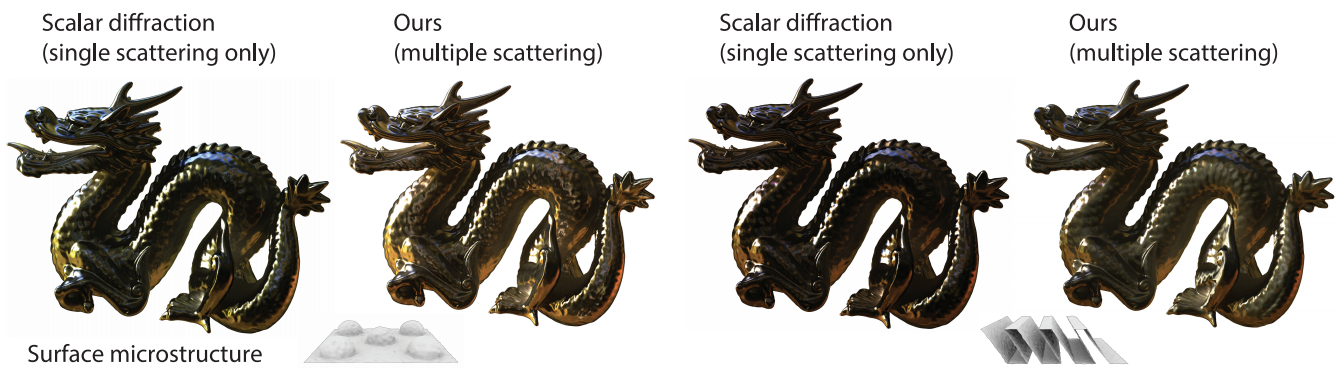

Computing the bidirectional scattering of a microstructure using scalar diffraction theory and path tracing

Viggo Falster, Adrian Jarabo, Jeppe Revall Frisvad

Computer Graphics Forum, Vol. 39(7) (Pacific Graphics 2020)

Abstract: Most models for bidirectional surface scattering by arbitrary explicitly defined microgeometry are either based on geometric optics and include multiple scattering but no diffraction effects or based on wave optics and include diffraction but no multiple scattering effects. The few exceptions to this tendency are based on rigorous solution of Maxwell’s equations and are computationally intractable for surface microgeometries that are tens or hundreds of microns wide. We set up a measurement equation for combining results from single scattering scalar diffraction theory with multiple scattering geometric optics using Monte Carlo integration. Since we consider an arbitrary surface microgeometry, our method enables us to compute expected bidirectional scattering of the metasurfaces with increasingly smaller details seen more and more often in production. In addition, we can take a measured microstructure as input and, for example, compute the difference in bidirectional scattering between a desired surface and a produced surface. In effect, our model can account for both diffraction colors due to wavelength-sized features in the microgeometry and brightening due to multiple scattering. We include scalar diffraction for refraction, and we verify that our model is reasonable by comparing with the rigorous solution for a microsurface with half ellipsoids.

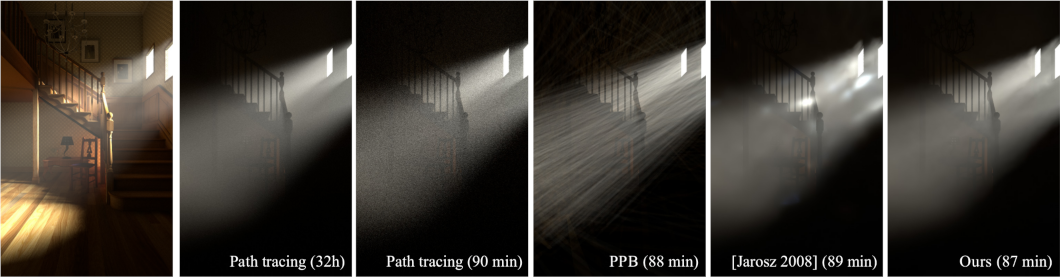

Progressive Transient Photon Beams

Julio Marco, Ibón Guillén, Wojciech Jarosz, Diego Gutierrez, Adrian Jarabo

Computer Graphics Forum, Vol. 38(6), 2019

Abstract: In this work we introduce a novel algorithm for transient rendering in participating media. Our method is consistent, robust, and is able to generate animations of time-resolved light transport featuring complex caustic light paths in media. We base our method on the observation that the spatial continuity provides an increased coverage of the temporal domain, and generalize photon beams to transient state. We extend steady-state photon beam radiance estimates to include the temporal domain. Then, we develop a progressive variant of our approach which provably converges to the correct solution using finite memory by averaging independent realizations of the estimates with progressively reduced kernel bandwidths. We derive the optimal convergence rates accounting for space and time kernels, and demonstrate our method against previous consistent transient rendering methods for participating media.
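The progressive scheme, averaging independent passes while shrinking the kernel, can be sketched with the bandwidth schedule commonly used in the progressive photon mapping literature, where the kernel measure (length, area, or volume) is scaled by (i + alpha) / (i + 1) after pass i. This is an assumption for illustration: the paper derives its own rates for the joint space and time kernels, and the names and default `alpha` below are hypothetical.

```python
def kernel_radii(initial_radius, num_passes, alpha=0.7, dims=1):
    """Radius used in each pass; the kernel measure shrinks by (i+alpha)/(i+1)."""
    radii = [initial_radius]
    for i in range(1, num_passes):
        measure_ratio = (i + alpha) / (i + 1)
        # A dims-dimensional kernel's measure scales with radius**dims.
        radii.append(radii[-1] * measure_ratio ** (1.0 / dims))
    return radii

def progressive_average(pass_estimates):
    """Running mean of per-pass estimates; only the current mean is stored."""
    mean = 0.0
    for i, estimate in enumerate(pass_estimates, start=1):
        mean += (estimate - mean) / i
    return mean

radii = kernel_radii(initial_radius=1.0, num_passes=5, alpha=0.7, dims=1)
print(radii)
print(progressive_average([2.0, 4.0, 6.0]))
```

With `alpha` strictly between 0 and 1 the radii decrease toward zero slowly enough that the averaged estimate keeps reducing variance, which is the trade-off behind consistency with finite memory.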

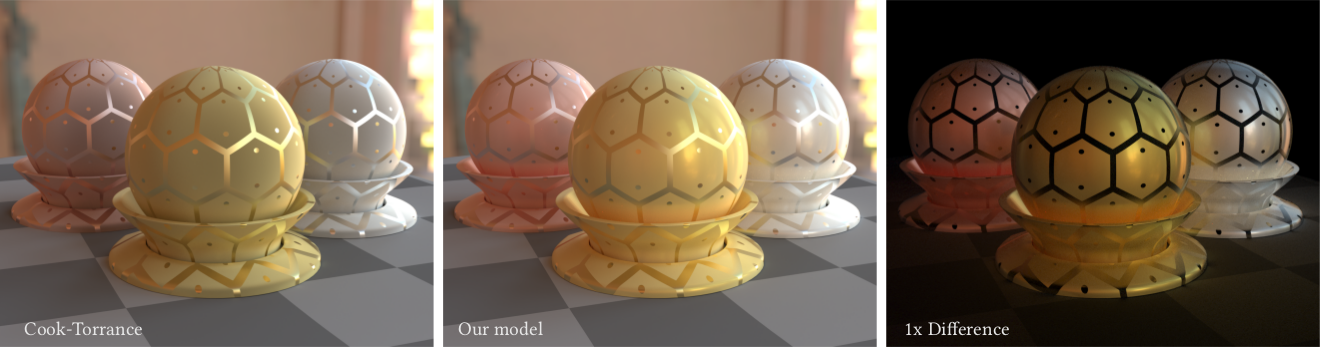

Practical Multiple Scattering for Rough Surfaces

Joo Ho Lee, Adrian Jarabo, Daniel S. Jeon, Diego Gutierrez, Min H. Kim

ACM Transactions on Graphics, Vol. 37(6) (SIGGRAPH Asia 2018)

Abstract: Microfacet theory concisely models light transport over rough surfaces. Specular reflection is the result of single mirror reflection on each facet, while exact computation of multiple scattering is either neglected, or modeled using costly importance sampling techniques. Practical but accurate simulation of multiple scattering in microfacet theory thus remains an open challenge. In this work, we revisit the traditional V-groove cavity model to find an analytical, cost-effective solution for multiple scattering in rough surfaces. Our kaleidoscopic model is made up of both real and virtual V-grooves, and allows us to calculate higher-order scattering in the microfacets in an analytical fashion. We then extend our model to include nonsymmetric grooves, allowing for additional degrees of freedom on the surface geometry, improving multiple reflections at grazing angles with backward compatibility to traditional normal distribution functions. We validate our model against ground truth Monte Carlo simulations, to evaluate its accuracy, and demonstrate its flexibility rendering anisotropy and textured materials. Our model is analytical, does not introduce significant cost and variance, can be seamlessly integrated in any rendering engine, preserves reciprocity and energy conservation, and is suitable for bidirectional methods.

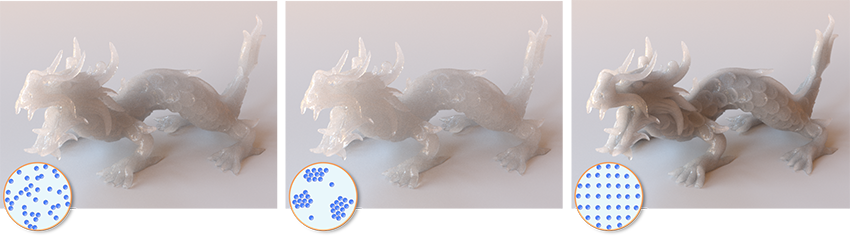

A Radiative Transfer Framework for Spatially-Correlated Materials

Adrian Jarabo, Carlos Aliaga, Diego Gutierrez

ACM Transactions on Graphics, Vol. 37(4) (SIGGRAPH 2018)

Abstract: We introduce a non-exponential radiative framework that takes into account the local spatial correlation of scattering particles in a medium. Most previous works in graphics have ignored this, assuming uncorrelated media with a uniform, random local distribution of particles. However, positive and negative correlation lead to slower- and faster-than-exponential attenuation respectively, which cannot be predicted by the Beer-Lambert law. As our results show, this has a major effect on extinction, and thus appearance. From recent advances in neutron transport, we first introduce our Extended Generalized Boltzmann Equation, and develop a general framework for light transport in correlated media. We lift the limitations of the original formulation, including an analysis of the boundary conditions, and present a model suitable for computer graphics, based on optical properties of the media and statistical distributions of scatterers. In addition, we present an analytic expression for transmittance in the case of positive correlation, and show how to incorporate it efficiently into a Monte Carlo renderer. We show results with a wide range of both positive and negative correlation, and demonstrate the differences compared to classic light transport.
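To make the contrast with the Beer-Lambert law concrete, the sketch below compares exponential transmittance with the transmittance obtained by averaging Beer-Lambert over a gamma-distributed extinction coefficient, which has a closed form and decays slower than exponentially. This is a generic illustration of non-exponential attenuation and is not claimed to be the analytic expression derived in the paper; the function names and parameter values are assumptions.

```python
import math

def beer_lambert(mean_extinction, distance):
    """Classic exponential transmittance for uncorrelated media."""
    return math.exp(-mean_extinction * distance)

def gamma_averaged_transmittance(mean_extinction, variance, distance):
    """E[exp(-sigma * t)] for gamma-distributed sigma with given mean and variance.

    For shape k and scale theta this expectation is (1 + theta * t) ** (-k).
    """
    scale = variance / mean_extinction        # theta
    shape = mean_extinction ** 2 / variance   # k
    return (1.0 + scale * distance) ** (-shape)

for t in (0.5, 1.0, 2.0, 4.0):
    print(t, beer_lambert(1.0, t), gamma_averaged_transmittance(1.0, 0.5, t))
```

As the variance goes to zero the gamma-averaged curve converges to the exponential one, while larger variance leaves a heavier tail, so more light survives over long distances.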

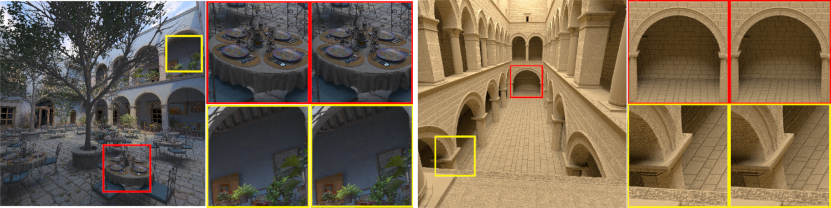

Second-Order Occlusion-Aware Volumetric Radiance Caching

Julio Marco, Adrian Jarabo, Wojciech Jarosz, Diego Gutierrez

ACM Transactions on Graphics, Vol. 37(2) (SIGGRAPH 2018)

Abstract: We present a second-order gradient analysis of light transport in participating media and use this to develop an improved radiance caching algorithm for volumetric light transport. We adaptively sample and interpolate radiance from sparse points in the medium using a second-order Hessian-based error metric to determine when interpolation is appropriate. We derive our metric from each point’s incoming light field, computed by using a proxy triangulation-based representation of the radiance reflected by the surrounding medium and geometry. We use this representation to efficiently compute the first- and second-order derivatives of the radiance at the cache points while accounting for occlusion changes. We also propose a self-contained two-dimensional model for light transport in media and use it to validate and analyze our approach, demonstrating that our method outperforms previous radiance caching algorithms both in terms of accurate derivative estimates and final radiance extrapolation. We generalize these findings to practical three-dimensional scenarios, where we show improved results while reducing computation time by up to 30% compared to previous work.

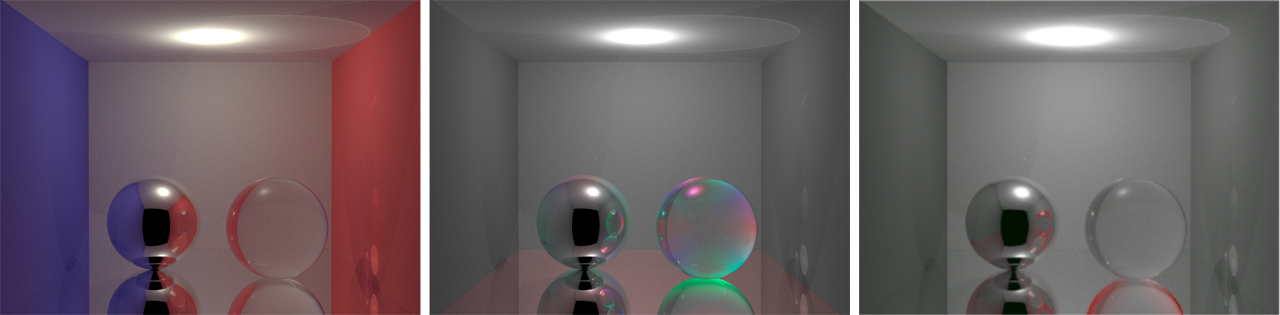

Bidirectional Rendering of Vector Light Transport

Adrian Jarabo, Victor Arellano

Computer Graphics Forum, Vol. 37(6), 2018

Abstract: At the foundation of many rendering algorithms is the symmetry between the path traversed by light and its adjoint path starting from the camera. However, several effects, including polarization or fluorescence, break that symmetry, and are defined only in the direction of light. This reduces the applicability of bidirectional methods, which exploit this symmetry to simulate light transport effectively. In this work we focus on how to include these non-symmetric effects within a bidirectional rendering algorithm. We generalize the path integral to support the constraints imposed by non-symmetric light transport. Based on this theoretical framework, we propose modifications on two bidirectional methods, namely bidirectional path tracing and photon mapping, extending them to support polarization and fluorescence, in both steady and transient state.

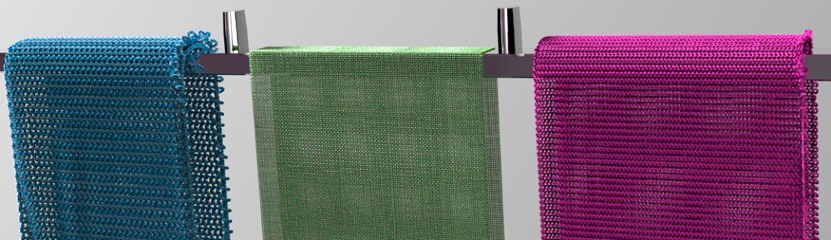

An Appearance Model for Textile Fibers

Carlos Aliaga, Carlos Castillo, Diego Gutierrez, Miguel A. Otaduy, Jorge Lopez-Moreno, Adrian Jarabo

Computer Graphics Forum, Vol. 36(4) (EGSR 2017)

Abstract: Accurately modeling how light interacts with cloth is challenging, due to the volumetric nature of cloth appearance and its multiscale structure, where microstructures play a major role in the overall appearance at higher scales. Recently, significant effort has been put on developing better microscopic models for cloth structure, which have allowed rendering fabrics with unprecedented fidelity. However, these highly-detailed representations still make severe simplifications on the scattering by individual fibers forming the cloth, ignoring the impact of fibers' shape, and avoiding to establish connections between the fibers' appearance and their optical and fabrication parameters. In this work we put our focus in the scattering of individual cloth fibers; we introduce a physically-based scattering model for fibers based on their low-level optical and geometric properties, relying on the extensive textile literature for accurate data. We demonstrate that scattering from cloth fibers exhibits much more complexity than current fiber models, showing important differences between cloth type, even in averaged conditions due to longer views. Our model can be plugged in any framework for cloth rendering, matches scattering measurements from real yarns, and is based on actual parameters used in the textile industry, allowing predictive bottom-up definition of cloth appearance.

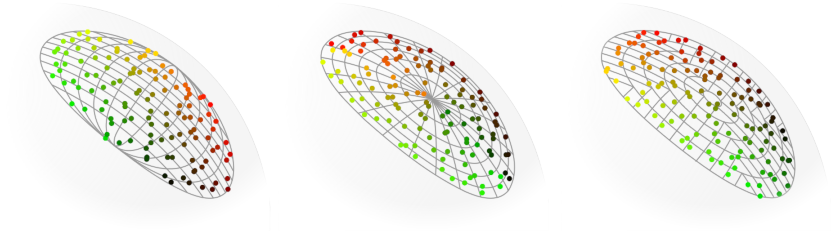

Area-Preserving Parameterizations for Spherical Ellipses

Ibón Guillén, Carlos Ureña, Alan King, Marcos Fajardo, Iliyan Georgiev, Jorge López-Moreno, Adrian Jarabo

Computer Graphics Forum, Vol. 36(4) (EGSR 2017)

Abstract: We present new methods for uniformly sampling the solid angle subtended by a disk. To achieve this, we devise two novel area-preserving mappings from the unit square [0,1]² to a spherical ellipse (i.e. the projection of the disk onto the unit sphere). These mappings allow for low-variance stratified sampling of direct illumination from disk-shaped light sources. We discuss how to efficiently incorporate our methods into a production renderer and demonstrate the quality of our maps, showing significantly lower variance than previous work.
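As a simpler relative of these mappings, consider the special case in which the disk's axis passes through the shading point, so that its projection onto the unit sphere is a spherical cap rather than a general spherical ellipse. The classic area-preserving map from the unit square to a cap is sketched below; it is not one of the two mappings proposed in the paper, and the function names are hypothetical.

```python
import math

def sample_spherical_cap(u, v, cos_theta_max):
    """Map (u, v) in the unit square to a direction inside a cap around +z.

    The map is area-preserving: equal areas in the square cover equal solid angles.
    """
    cos_theta = 1.0 - u * (1.0 - cos_theta_max)
    sin_theta = math.sqrt(max(0.0, 1.0 - cos_theta * cos_theta))
    phi = 2.0 * math.pi * v
    return (sin_theta * math.cos(phi), sin_theta * math.sin(phi), cos_theta)

def cap_solid_angle(cos_theta_max):
    """Solid angle of the cap; its reciprocal is the uniform sampling density."""
    return 2.0 * math.pi * (1.0 - cos_theta_max)

# Stratified 4x4 samples at cell centers for a cap with a 30-degree half-angle.
cos_max = math.cos(math.radians(30.0))
samples = [sample_spherical_cap((i + 0.5) / 4, (j + 0.5) / 4, cos_max)
           for i in range(4) for j in range(4)]
print(len(samples), cap_solid_angle(cos_max))
```

Because the map preserves area, stratifying the unit square directly stratifies the solid angle, which is the property that makes such parameterizations attractive for direct illumination.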

Transient Photon Beams

Julio Marco, Wojciech Jarosz, Diego Gutierrez, Adrian Jarabo

Spanish Computer Graphics Conference (CEIG), 2017

Abstract: Recent advances in transient imaging and their applications have created the need for forward models that allow precise generation and analysis of time-resolved light transport data. However, traditional steady-state rendering techniques are not suitable for computing transient light transport due to the aggravation of the inherent Monte Carlo variance over time. These issues are especially problematic in participating media, which demand a high number of samples to achieve noise-free solutions. We address this problem by presenting the first photon-based method for transient rendering of participating media that performs density estimations on time-resolved precomputed photon maps. We first introduce the transient integral form of the radiative transfer equation into the computer graphics community, including transient delays on the scattering events. Based on this formulation we leverage the high density and parameterized continuity provided by photon beams algorithms to present a new transient method that significantly mitigates variance and efficiently renders participating media effects in transient state.

A Biophysically-Based Model of the Optical Properties of Skin Aging

Jose Iglesias, Carlos Aliaga, Adrian Jarabo and Diego Gutierrez

Computer Graphics Forum, Vol. 34(2) (Eurographics 2015)

Abstract: This paper presents a time-varying, multi-layered biophysically-based model of the optical properties of human skin, suitable for simulating appearance changes due to aging. We have identified the key aspects that cause such changes, both in terms of the structure of skin and its chromophore concentrations, and rely on the extensive medical and optical tissue literature for accurate data. Our model can be expressed in terms of biophysical parameters, optical parameters commonly used in graphics and rendering (such as spectral absorption and scattering coefficients), or more intuitively with higher-level parameters such as age, gender, skin care or skin type. It can be used with any rendering algorithm that uses diffusion profiles, and it can automatically simulate different types of skin at different stages of aging, avoiding the need for artistic input or costly capture processes.

Separable Subsurface Scattering

J. Jimenez, K. Zsolnai, A. Jarabo, C. Freude, T. Auzinger, X-C. Wu, J. von der Pahlen, M. Wimmer and D. Gutierrez

Computer Graphics Forum, 2015

Abstract: In this paper we propose two real-time models for simulating subsurface scattering for a large variety of translucent materials, which need under 0.5 milliseconds per frame to execute. This makes them a practical option for real-time production scenarios. Current state-of-the-art, real-time approaches simulate subsurface light transport by approximating the radially symmetric non-separable diffusion kernel with a sum of separable Gaussians, which requires multiple (up to twelve) 1D convolutions. In this work we relax the requirement of radial symmetry to approximate a 2D diffuse reflectance profile by a single separable kernel. We first show that low-rank approximations based on matrix factorization outperform previous approaches, but they still need several passes to get good results. To solve this, we present two different separable models: the first one yields a high-quality diffusion simulation, while the second one offers an attractive trade-off between physical accuracy and artistic control. Both allow rendering subsurface scattering using only two 1D convolutions, reducing both execution time and memory consumption, while delivering results comparable to techniques with higher cost. Using our importance-sampling and jittering strategies, only seven samples per pixel are required. Our methods can be implemented as simple post-processing steps without intrusive changes to existing rendering pipelines.
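
The low-rank idea mentioned in this abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it builds a radially symmetric, non-separable 2D kernel, takes its best rank-1 approximation with an SVD, and applies that approximation as two 1D convolutions. The kernel shape and the names `radial_kernel`, `rank1_factors` and `separable_blur` are assumptions made for this example.

```python
import numpy as np

def radial_kernel(size=31, sigma=4.0):
    """Radially symmetric 2D profile (hypothetical stand-in for a diffusion profile)."""
    ax = np.arange(size) - size // 2
    r = np.hypot(*np.meshgrid(ax, ax))
    # Sum of two exponentials in r: radially symmetric but not separable.
    k = np.exp(-r / sigma) + 0.3 * np.exp(-r / (3.0 * sigma))
    return k / k.sum()

def rank1_factors(kernel):
    """Best rank-1 (separable) approximation of a 2D kernel, obtained via SVD."""
    u, s, vt = np.linalg.svd(kernel)
    col = u[:, 0] * np.sqrt(s[0])
    row = vt[0, :] * np.sqrt(s[0])
    # SVD signs are arbitrary; flip both factors so the 1D kernels are positive.
    if col.sum() < 0:
        col, row = -col, -row
    return col, row

def separable_blur(image, col, row):
    """Two 1D convolutions: along each row, then along each column.

    Assumes both image dimensions are at least as long as the 1D kernels.
    """
    tmp = np.apply_along_axis(lambda v: np.convolve(v, row, mode="same"), 1, image)
    return np.apply_along_axis(lambda v: np.convolve(v, col, mode="same"), 0, tmp)
```

Blurring an image with `separable_blur(image, *rank1_factors(radial_kernel()))` costs two 1D passes instead of one full 2D convolution. The residual `kernel - np.outer(col, row)` shows how much of the radial profile a single separable term misses.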

Bidirectional Clustering for Scalable VPL-based Global Illumination

Adrian Jarabo, Raul Buisan and Diego Gutierrez

CEIG 2015

Abstract: Virtual Point Lights (VPL) methods approximate global illumination (GI) in a scene by using a large number of virtual lights modeling the reflected radiance of a surface. These methods are efficient, and allow computing noise-free images significantly faster than other methods. However, they scale linearly with the number of virtual lights and with the number of pixels to be rendered. Previous approaches improve the scalability of the method by hierarchically evaluating the virtual lights, allowing sublinear performance with respect to the lights being evaluated. In this work, we introduce a novel bidirectional clustering approach, by hierarchically evaluating both the virtual lights and the shading points. This allows reusing radiance evaluation between pixels, and obtaining sublinear costs with respect to both lights and camera samples. We demonstrate significantly better performance than state-of-the-art VPL clustering methods with several examples, including high-resolution images, distributed effects, and rendering of light fields.

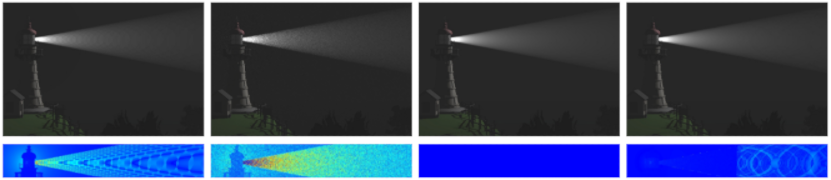

A Framework for Transient Rendering

Adrian Jarabo, Julio Marco, Adolfo Muñoz, Raul Buisan, Wojciech Jarosz and Diego Gutierrez

ACM Transactions on Graphics, Vol.33(6) (SIGGRAPH Asia 2014)

Abstract: Recent advances in ultra-fast imaging have triggered many promising applications in graphics and vision, such as capturing transparent objects, estimating hidden geometry and materials, or visualizing light in motion. There is, however, very little work regarding the effective simulation and analysis of transient light transport, where the speed of light can no longer be considered infinite. We first introduce the transient path integral framework, formally describing light transport in transient state. We then analyze the difficulties arising when considering the light's time-of-flight in the simulation (rendering) of images and videos. We propose a novel density estimation technique that allows reusing sampled paths to reconstruct time-resolved radiance, and devise new sampling strategies that take into account the distribution of radiance along time in participating media. We then efficiently simulate time-resolved phenomena (such as caustic propagation, fluorescence or temporal chromatic dispersion), which can help design future ultra-fast imaging devices using an analysis-by-synthesis approach, as well as to achieve a better understanding of the nature of light transport.
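
As a point of reference for what "time-resolved radiance" means here, the sketch below bins path contributions by their time of flight. It is a naive histogram baseline under simplifying assumptions (light travelling at the vacuum speed of light, each path given as a hypothetical `(contribution, optical_length)` pair), not the density estimation technique proposed in the paper.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second, in vacuum

def time_resolved_histogram(paths, t_start, t_end, bins):
    """Accumulate path contributions into temporal bins by time of flight.

    `paths` is an iterable of (contribution, optical_length_in_metres) pairs;
    this layout is an assumption made for the example.
    """
    hist = [0.0] * bins
    bin_width = (t_end - t_start) / bins
    for contribution, optical_length in paths:
        t = optical_length / SPEED_OF_LIGHT  # time of flight in seconds
        if t_start <= t < t_end:
            # Clamp to guard against rounding at the upper edge of the range.
            index = min(int((t - t_start) / bin_width), bins - 1)
            # Divide by the bin width so the histogram approximates a density in time.
            hist[index] += contribution / bin_width
    return hist
```

With few samples per bin such a histogram is noisy, which is the variance problem that motivates reusing sampled paths across time.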

Abstract: Rendering participating media is still a challenging and time consuming task. In such media light interacts at every differential point of its path. Several rendering algorithms are based on ray marching: dividing the path of light into segments and calculating interactions at each of them. In this work, we revisit and analyze ray marching both as a quadrature integrator and as an initial value problem solver, and apply higher order adaptive solvers that ensure several interesting properties, such as faster convergence, adaptiveness to the mathematical definition of light transport and robustness to singularities. We compare several numerical methods, including standard ray marching and Monte Carlo integration, and illustrate the benefits of different solvers for a variety of scenes. Any participating media rendering algorithm that is based on ray marching may benefit from the application of our approach by reducing the number of needed samples (and therefore, rendering time) and increasing accuracy.
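
The two views of ray marching described in this abstract can be sketched for the simplest case, transmittance along a ray. The first function integrates the optical depth with a midpoint rule (standard ray marching as quadrature). The second treats the same quantity as the initial value problem dT/dt = -sigma_t(t) T with T(0) = 1, solved here with fixed-step fourth-order Runge-Kutta. The extinction profile `sigma_t` is hypothetical, and the paper's adaptive solvers are replaced by a fixed step to keep the example short.

```python
import math

def sigma_t(t):
    """Hypothetical extinction coefficient along the ray: a smooth density bump."""
    return 0.5 + 2.0 * math.exp(-((t - 1.0) ** 2) / 0.1)

def transmittance_ray_marching(t_max, steps):
    """Standard ray marching: midpoint-rule quadrature of the optical depth."""
    dt = t_max / steps
    tau = sum(sigma_t((i + 0.5) * dt) for i in range(steps)) * dt
    return math.exp(-tau)

def transmittance_rk4(t_max, steps):
    """Initial value problem dT/dt = -sigma_t(t) * T, T(0) = 1, solved with RK4."""
    dt = t_max / steps
    T, t = 1.0, 0.0
    for _ in range(steps):
        k1 = -sigma_t(t) * T
        k2 = -sigma_t(t + dt / 2) * (T + dt / 2 * k1)
        k3 = -sigma_t(t + dt / 2) * (T + dt / 2 * k2)
        k4 = -sigma_t(t + dt) * (T + dt * k3)
        T += dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        t += dt
    return T
```

Both functions estimate the same transmittance, so comparing them at different step counts gives a rough feel for how the choice of solver affects the number of samples needed.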

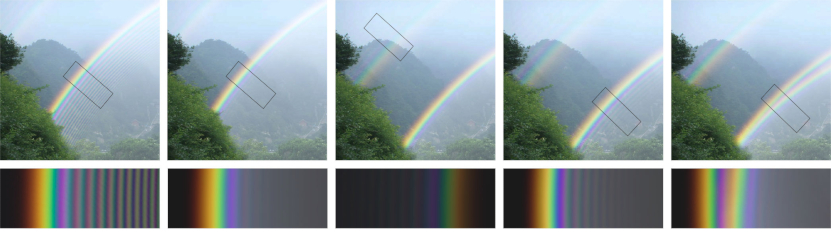

Physically-Based Simulation of Rainbows

Iman Sadeghi, Adolfo Muñoz, Philip Laven, Francisco Seron, Wojciech Jarosz, Diego Gutierrez and Henrik Wann Jensen

ACM Transactions on Graphics, Vol. 31(1) (presented at SIGGRAPH 2012)

Abstract: In this paper we derive a physically-based model for simulating rainbows. Previous techniques for simulating rainbows have used either geometric optics (ray tracing) or Lorenz-Mie theory. Lorenz-Mie theory is by far the most accurate technique as it takes into account optical effects such as dispersion, polarization, interference, and diffraction. These effects are critical for simulating rainbows accurately. However, as Lorenz-Mie theory is restricted to scattering by spherical particles, it cannot be applied to real raindrops which are non-spherical, especially for larger raindrops. We present the first comprehensive technique for simulating the interaction of a wavefront of light with a physically-based water drop shape. Our technique is based on ray tracing extended to account for dispersion, polarization, interference, and diffraction. Our model matches Lorenz-Mie theory for spherical particles, but it also enables the accurate simulation of non-spherical particles. It can simulate many different rainbow phenomena including double rainbows and supernumerary bows. We show how the non-spherical raindrops influence the shape of the rainbows, and we provide a simulation of the rare twinned rainbow, which is believed to be caused by non-spherical water drops.

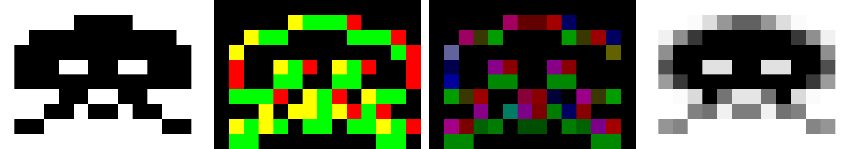

SMAA: Enhanced Subpixel Morphological Antialiasing

Jorge Jimenez, Jose I. Echevarria and Diego Gutierrez

Technical Report RR-2011-05, 2011

Abstract: We present a new image-based, post-processing antialiasing technique, which offers practical solutions to the common, open problems of existing filter-based real-time antialiasing algorithms. Some of the new features include local contrast analysis for more reliable edge detection, and a simple and effective way to handle sharp geometric features and diagonal lines. This, along with our accelerated and accurate pattern classification allows for a better reconstruction of silhouettes. Our method shows for the first time how to combine morphological antialiasing (MLAA) with additional multi/supersampling strategies (MSAA, SSAA) for accurate subpixel features, and how to couple it with temporal reprojection; always preserving the sharpness of the image. All these solutions combine synergies making for a very robust technique, yielding results of better overall quality than previous approaches while more closely converging to MSAA/SSAA references but maintaining extremely fast execution times. Additionally, we propose different presets to better fit the available resources or particular needs of each scenario.

Filtering Approaches for Real-Time Anti-Aliasing

J. Jimenez, D. Gutierrez, J. Yang, A. Reshetov, P. Demoreuille, T. Berghoff, C. Perthuis, H. Yu, M. McGuire, T. Lottes, H. Malan, E. Persson, D. Andreev and T. Sousa

SIGGRAPH 2011 (Course)

Abstract: For more than a decade, Supersample Anti-Aliasing (SSAA) and Multisample Anti-Aliasing (MSAA) have been the gold standard antialiasing solution in games. However, these techniques are not well suited for deferred shading or fixed environments like the current generation of consoles. In the last years, Industry and Academia have begun to explore alternative approaches, where anti-aliasing is performed as a post-processing step. The original, CPU-based Morphological Anti-Aliasing (MLAA) method gave birth to an explosion of real-time anti-aliasing techniques that rival MSAA. This course will cover the most relevant techniques, from the original MLAA to the latest cutting edge advancements.

Convolution-based simulation of homogeneous subsurface scattering

Adolfo Muñoz, Jose I. Echevarria, Francisco Serón and Diego Gutierrez

Computer Graphics Forum, 2011

Abstract: This paper introduces a new method for simulating homogeneous subsurface light transport in translucent objects. Our approach is based on irradiance convolutions over a multi-layered representation of the volume for light transport, which is general enough to obtain plausible depictions of translucent objects based on the diffusion approximation. We aim at providing an efficient physically based algorithm that can apply arbitrary diffusion profiles to general geometries. We obtain accurate results for a wide range of materials, on par with the hierarchical method by Jensen and Buhler.

Practical Morphological Anti-Aliasing

Jorge Jimenez, Belen Masia, Jose I. Echevarria, F. Navarro and Diego Gutierrez

In GPU Pro 2: Advanced Rendering Techniques, 2011

Abstract: Multisample anti-aliasing (MSAA) remains the most extended solution to deal with aliasing, crucial when rendering high quality graphics. Even though it offers superior results in real time, it has a high memory footprint, posing a problem for the current generation of consoles, and it implies a non-negligible time consumption. Further, there are many platforms where MSAA and MRT (multiple render targets, required for fundamental techniques such as deferred shading) cannot coexist. The majority of alternatives to MSAA which have been developed, usually implemented in shader units, cannot compete in quality with MSAA, which remains the gold standard solution. This work introduces an alternative anti-aliasing method offering results whose quality lies between 4x and 8x MSAA at a fraction of its memory and time consumption. Besides, the technique works as a post-process, and can therefore be easily integrated in the rendering pipeline of any game architecture.

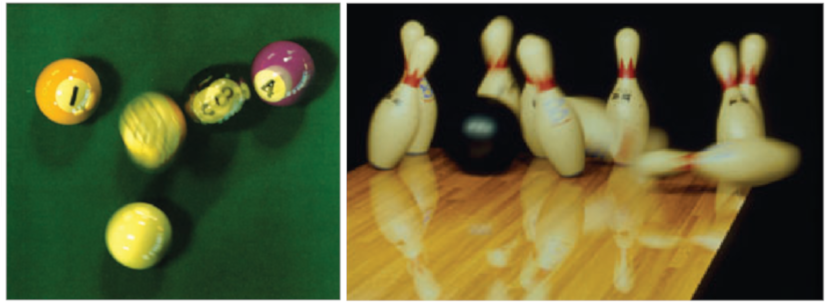

Rendering Motion Blur: State of the Art

Fernando Navarro, Francisco Seron and Diego Gutierrez

Computer Graphics Forum, 2011

Abstract: Motion blur is a fundamental cue in the perception of objects in motion. This phenomenon manifests as a visible trail along the trajectory of the object and is the result of the combination of relative motion and light integration taking place in film and electronic cameras. In this work, we analyse the mechanisms that produce motion blur in recording devices and the methods that can simulate it in computer generated images. Light integration over time is one of the most expensive processes to simulate in high-quality renders; as such, we make an in-depth review of the existing algorithms and we categorize them in the context of a formal model that highlights their differences, strengths and limitations. We conclude this report by proposing a number of alternative classifications that will help the reader identify the best technique for a particular scenario.

A Practical Appearance Model for Dynamic Facial Color

Jorge Jimenez, Timothy Scully, Nuno Barbosa, Craig Donner, Xenxo Alvarez, Teresa Vieira, Paul Matts, Veronica Orvalho, Diego Gutierrez and Tim Weyrich

ACM Transactions on Graphics, Vol. 29(5) (SIGGRAPH Asia 2010)

Abstract: Facial appearance depends on both the physical and physiological state of the skin. As people move, talk, undergo stress, and change expression, skin appearance is in constant flux. One of the key indicators of these changes is the color of skin. Skin color is determined by scattering and absorption of light within the skin layers, caused mostly by concentrations of two chromophores, melanin and hemoglobin. In this paper we present a real-time dynamic appearance model of skin built from in vivo measurements of melanin and hemoglobin concentrations. We demonstrate an efficient implementation of our method, and show that it adds negligible overhead to existing animation and rendering pipelines. Additionally, we develop a realistic, intuitive, and automatic control for skin color, which we term a skin appearance rig. This rig can easily be coupled with a traditional geometric facial animation rig. We demonstrate our method by augmenting digital facial performance with realistic appearance changes.

Visualizing Underwater Ocean Optics

Diego Gutierrez, Francisco Seron, Oscar Anson and Adolfo Muñoz

Computer Graphics Forum (Eurographics 2008), Vol. 27(2), pp. 547-556

Abstract: Simulating the in-water ocean light field is a daunting task. Ocean waters are one of the richest participating media, where light interacts not only with water molecules, but with suspended particles and organic matter as well. The concentration of each constituent greatly affects these interactions, resulting in very different hues. Inelastic scattering events such as fluorescence or Raman scattering imply energy transfers that are usually neglected in the simulations. Our contributions in this paper are a bio-optical model of ocean waters suitable for computer graphics simulations, along with an improved method to obtain an accurate solution of the in-water light field based on radiative transfer theory. The method provides a link between the inherent optical properties that define the medium and its apparent optical properties, which describe how it looks. The bio-optical model of the ocean uses published data from oceanography studies. For inelastic scattering we compute all frequency changes at higher and lower energy values, based on the spectral quantum efficiency function of the medium. The results shown prove the usability of the system as a predictive rendering algorithm. Areas of application for this research span from underwater imagery to remote sensing; the resolution method is general enough to be usable in any type of participating medium simulation.

Simulation of Atmospheric Phenomena

Diego Gutierrez, Adolfo Muñoz, Oscar Anson and Francisco Serón

Computers & Graphics, Vol. 20(6), pp. 994-1010

Abstract: This paper presents a physically based simulation of atmospheric phenomena. It takes into account the physics of non-homogeneous media in which the index of refraction varies continuously, creating curved light paths. As opposed to previous research in this area, we solve the physically based differential equation that describes the trajectory of light. We develop an accurate expression of the index of refraction in the atmosphere as a function of wavelength, based on real measured data. We also describe our atmosphere profile manager, which lets us mimic the initial conditions of real-world scenes for our simulations. The method is validated both visually (by comparing the images with the real pictures) and numerically (with the extensive literature from other areas of research such as optics or meteorology). The phenomena simulated include the inferior and superior mirages, the Fata Morgana, the Novaya–Zemlya, the Viking’s end of the world, the distortions caused by heat waves and the green flash.
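
A curved light path in a medium with a continuously varying index of refraction n can be sketched by numerically integrating the ray equation of geometric optics, d/ds (n dr/ds) = grad n, where s is arc length. The example below is a minimal 2D illustration with simple fixed-step integration. The linear index profile is hypothetical and its gradient is exaggerated for visibility; the paper's measured atmosphere profiles and its solver are not reproduced here.

```python
import math

def refractive_index(y):
    """Hypothetical profile: the index decreases linearly with height y."""
    return 1.0003 - 1.0e-5 * y

def grad_n(y):
    """Gradient of the hypothetical profile above (constant, pointing downwards)."""
    return (0.0, -1.0e-5)

def trace_curved_ray(origin, direction, ds, steps):
    """Integrate d/ds (n dr/ds) = grad n with fixed steps of arc length ds.

    The state is the position (x, y) and the vector v = n * dr/ds.
    """
    x, y = origin
    norm = math.hypot(direction[0], direction[1])
    n = refractive_index(y)
    vx, vy = n * direction[0] / norm, n * direction[1] / norm
    path = [(x, y)]
    for _ in range(steps):
        n = refractive_index(y)
        # dr/ds = v / n
        x += ds * vx / n
        y += ds * vy / n
        # dv/ds = grad n
        gx, gy = grad_n(y)
        vx += ds * gx
        vy += ds * gy
        path.append((x, y))
    return path
```

A ray launched horizontally bends towards the region of higher index (downwards in this profile), which is the mechanism behind the mirage effects listed in the abstract.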

Efficient selective rendering of participating media

Oscar Anson, Veronica Sundstedt, Diego Gutierrez and Alan Chalmers

ACM Symposium on Applied Perception in Graphics and Visualization

Abstract: Realistic image synthesis is the process of computing photorealistic images which are perceptually and measurably indistinguishable from real-world images. In order to obtain high fidelity rendered images it is required that the physical processes of materials and the behavior of light are accurately modelled and simulated. Most computer graphics algorithms assume that light passes freely between surfaces within an environment. However, in many applications, ranging from evaluation of exit signs in smoke filled rooms to design of efficient headlamps for foggy driving, realistic modelling of light propagation and scattering is required. The computational requirements for calculating the interaction of light with such participating media are substantial. This process can take many minutes or even hours. Many times rendering efforts are spent on computing parts of the scene that will not be perceived by the viewer. In this paper we present a novel perceptual strategy for physically-based rendering of participating media. By using a combination of a saliency map with our new extinction map (X-map) we can significantly reduce rendering times for inhomogeneous media. We also validate the visual quality of the resulting images using two objective difference metrics and a subjective psychophysical experiment. Although the average pixel errors of these metrics are all less than 1%, the experiment using human observers indicates that this degradation in quality is still noticeable in certain scenes, contrary to what previous work has suggested.

Non-Linear Volume Photon Mapping

Diego Gutierrez, Adolfo Muñoz, Oscar Anson and Francisco Seron

Eurographics Symposium on Rendering 2005, pp. 291-300

Abstract: This paper describes a novel extension of the photon mapping algorithm, capable of handling both volume multiple inelastic scattering and curved light paths simultaneously. The extension is based on the Full Radiative Transfer Equation (FRTE) and Fermat's law, and yields physically accurate, high-dynamic data that can be used for image generation or for other simulation purposes, such as driving simulators, underwater vision or lighting studies in architecture. Photons are traced into the participating medium with a varying index of refraction, and their curved trajectories followed (curved paths are the cause of certain atmospheric effects such as mirages or rippling desert images). Every time a photon is absorbed, a Russian roulette algorithm based on the quantum efficiency of the medium determines whether the inelastic scattering event takes place (causing volume fluorescence). The simulation of both underwater and atmospheric effects is shown, providing a global illumination solution without the restrictions of previous approaches.