A large-scale dataset of synthetic images with dense segmentation annotations at the texture and subtexture levels.

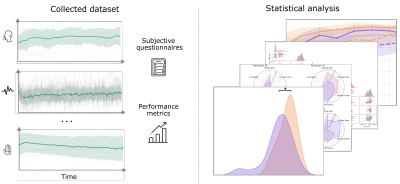

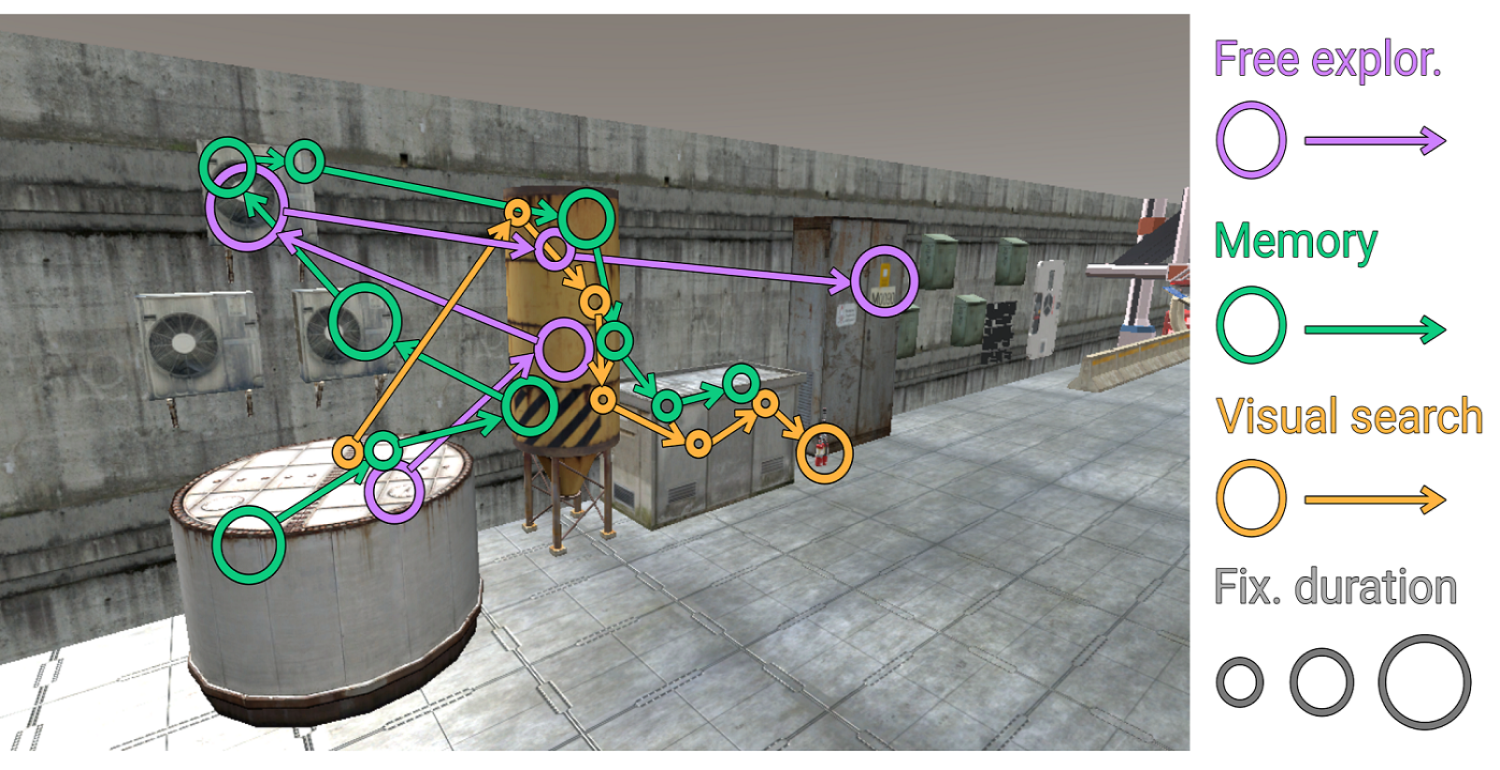

A dataset of physiological and behavioral signals captured in a VR visual-search experience under different levels of multisensory cognitive load and movement. It includes ECG, EDA, PPG, respiration, eye-tracking, and performance data, as well as subjective questionnaires, from 36 participants.

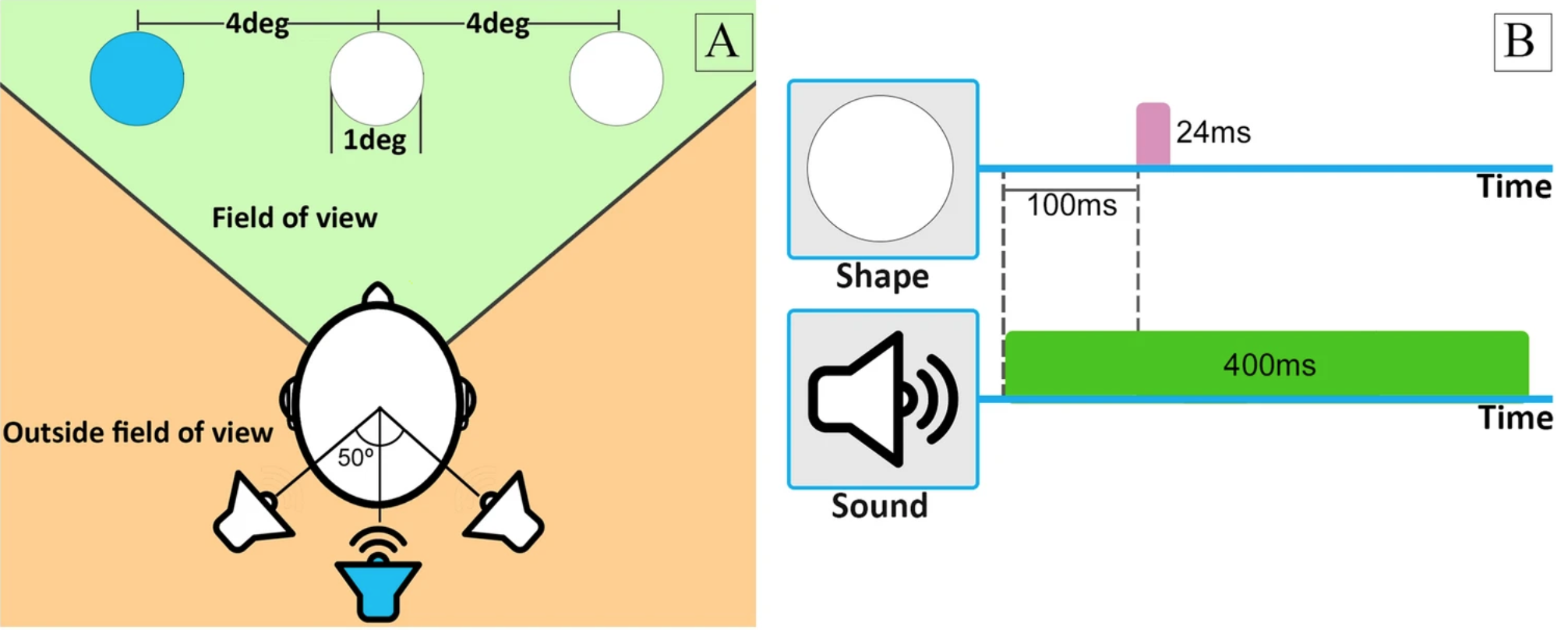

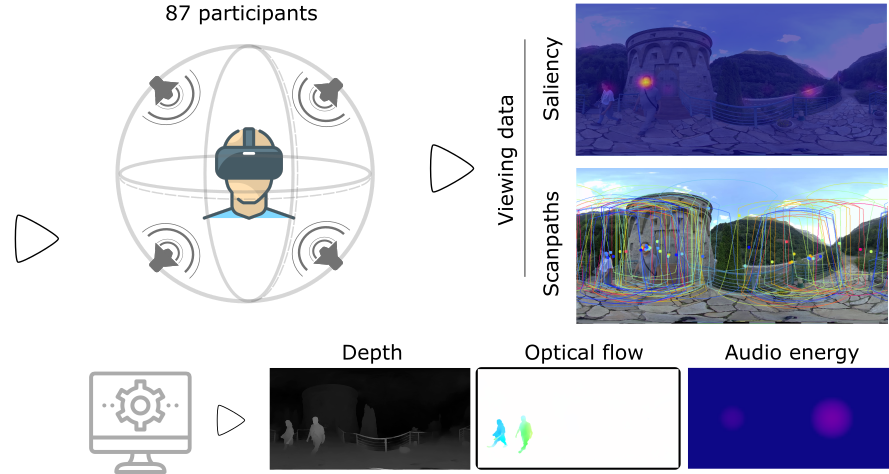

A dataset of eye-tracking data captured in a VR audiovisual environment. Participants performed a visual search task while sometimes receiving simultaneous auditory cues. The dataset includes audiovisual cue information and participants' detection and recognition performance.

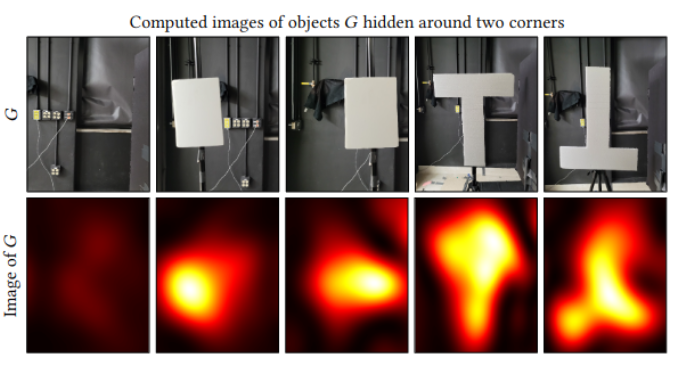

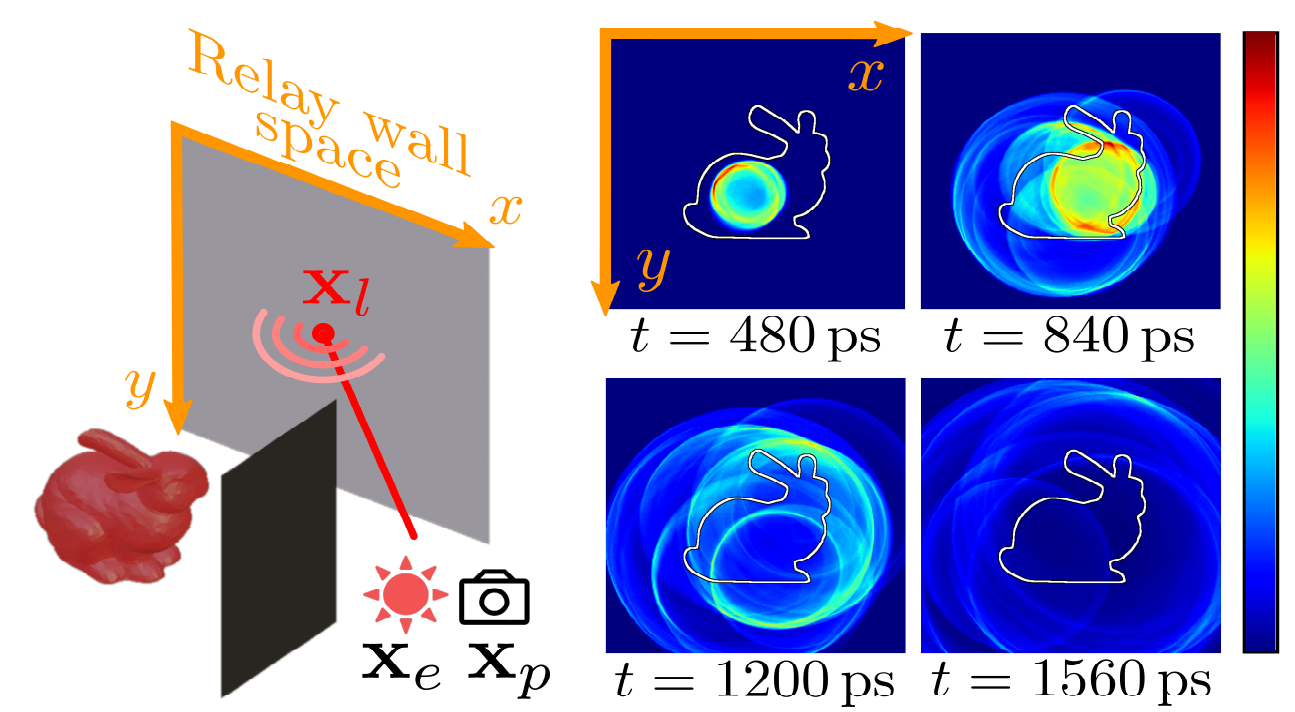

mitransient is a library that extends the Mitsuba 3 path tracer with support for transient simulations, including polarization tracking, differentiable transient rendering, frequency-space rendering, and non-line-of-sight (NLOS) data capture simulations.